I have extensive experience with Graylog, Kibana (ELK), and Splunk, having managed production clusters ingesting hundreds of gigabytes per day with all three, at my current and previous companies.

Why the three of them? It depends on what was already in place, if anything, and it’s always good to have competitors when negotiating software licenses.

Both Graylog and Kibana rely on the ElasticSearch database for storage, so we’ll talk about ElasticSearch too.

They’re 90% the same

Before we start, you should know that they are 90% the same. They ingest logs and let you search and visualize said logs.

If there is one remarkable thing from managing the three of them extensively, it’s that they all work pretty well and they scale.

It’s extremely rare to face multiple competing products that are all good. In fact I can’t think of any other software trial I’ve done where all the products worked well.

Pricing

First things first. They can all be expensive.

Splunk is well-known as the most expensive off-the-shelf software in the world (yes, it’s putting Oracle to shame). To give an order of magnitude, at one place many years ago: 800 physical hosts, $12M a year for Splunk (I really hope they renegotiated their contract).

Splunk can charge per GB (this gets really punitive the more your company grows), or per node, or “unlimited” for the enterprise plan.

While it’s tempting to run away to the main competitor (namely ELK: ElasticSearch Logstash Kibana), the competition is not cheap either. They claim to be open source but they are neither open source nor free.

ElasticSearch and Kibana notably leave all authentication capabilities to the enterprise edition. If you want any authentication, which all enterprises do because of regulations, then you’re going to pay up. (Graylog doesn’t put all features behind a paywall but that may change). *EDIT: Free ELK got authentication after version 6.8 (end 2019), this was finally backported to the free edition after years of repeated backlash and data breaches.*

All things considered, all solutions will cost a sizable amount, and the hardware to run them on doesn’t come cheap either. Centralized logging is a MUST HAVE in every company so they will eventually pay for the enterprise plan or leave access wide open.

It requires serious tooling to manage hundreds of hosts and applications in a company: centralized logging, monitoring, IDE, CI/CD, profilers, etc. Logging and monitoring are an integral part of infrastructure and it is perfectly normal to spend 10% there. It’s really not possible to operate stably and efficiently without them, though not all companies care about efficiency or stability.

Most developers dread paying for software, let alone talking to sales, yet it has to be done when running a company. Don’t be afraid to acquire software. Get quotes from all sides and compare them against each other.

Note that Splunk has been cutting a zero off their bill since ELK entered the landscape aggressively. You’re probably leaving a ton of money on the table if you haven’t renegotiated your contracts in the past few years.

Storage and Scaling

Splunk and ElasticSearch storage scale extremely well. They can take a hundred gigabytes a day with barely any tuning.

Out of all the databases I have worked with in my life, ElasticSearch is most certainly THE easiest to set up and to scale (ingesting a TB a day is easy with a bit of tuning and appropriate hardware). It is truly incredible.

The real challenge is usually obtaining hardware: a minimum of 3 or 5 nodes with terabytes of disk. Older companies are stuck with absurd procurement processes taking a year to get one machine. This can be a blocker to rolling out centralized logging; the project really depends on having hardware resources, and a large amount of them.

Performance Issue in Splunk

Now the dark side of Splunk. The performance in search is abysmal. It basically can’t return more than 100k results per second.

Let’s say I am running a search to show web requests per hour over the past week. There are 300 million of those, so the chart will take about an hour to gather all the events and complete. It’s basically unusable (cherry on top: getting logged out for inactivity after 15 minutes, forcing me to restart the search).
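The arithmetic behind that estimate, as a quick sketch. The 100k results per second figure is the cap observed above; the function and variable names are hypothetical, made up for illustration.

```python
def search_duration_seconds(event_count: int, results_per_second: int) -> float:
    """Time needed to return every matching event at a fixed throughput cap."""
    return event_count / results_per_second

week_of_requests = 300_000_000  # web requests over the past week
observed_cap = 100_000          # results per second, as observed in Splunk

seconds = search_duration_seconds(week_of_requests, observed_cap)
print(f"{seconds:.0f} s, about {seconds / 60:.0f} minutes")
# → 3000 s, about 50 minutes
```

At that rate a search returning 1 million events would take around 10 seconds, which is why narrow searches feel fine and broad ones do not.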

On the other hand ElasticSearch (Graylog and Kibana) can complete the same search just fine in a couple seconds. It can truly aggregate a billion logs as if it were nothing. Magic!

Now, if I add a filter to restrict the Splunk search to web requests on path "/something/*", there are only 1 million of them. Splunk is able to display the chart fast enough. It can actually filter through billions of events extremely quickly, however it cannot return the results quickly (capped at about 100k results/s). Profiling indicates it’s taking forever transferring data between nodes and/or computing all sorts of statistics.

It’s a big problem with Splunk: it’s painful if not impossible to work with a large number of events (there are quick filters to sample 1:100 or 1:1000 of the results, otherwise the tool would be plain unusable).

On the other hand, Kibana (ElasticSearch) can always search and return incredibly quickly. The actual trick is that it doesn’t gather the results, only the first 500 results, and it doesn’t count the results for real, only does an estimate (see HyperLogLog and Counting in ElasticSearch).

If you are interested in high performance data structures and algorithms, HyperLogLog, Bloom filters and murmurhash are novelties responsible for significant performance improvements in this decade. Definitely worth reading about.
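To give a feel for how an estimate can stand in for an exact count, here is a minimal HyperLogLog sketch. It illustrates the general technique only and is not ElasticSearch’s implementation. It uses SHA-1 from the standard library as a stand-in hash where a real system would use something faster such as murmurhash, and the class and parameter names are hypothetical.

```python
import hashlib
import math

class HyperLogLog:
    """Approximate distinct counter using 2**p small registers."""

    def __init__(self, p: int = 12):
        self.p = p
        self.m = 1 << p                       # number of registers
        self.registers = [0] * self.m
        self.alpha = 0.7213 / (1 + 1.079 / self.m)  # bias correction, valid for m >= 128

    def add(self, item: str) -> None:
        # 64-bit hash of the item (SHA-1 is a stand-in, see the note above)
        h = int.from_bytes(hashlib.sha1(item.encode()).digest()[:8], "big")
        index = h >> (64 - self.p)                 # first p bits pick a register
        rest = h & ((1 << (64 - self.p)) - 1)      # remaining bits
        # rank = 1-based position of the leftmost 1-bit in the remaining bits
        rank = (64 - self.p) - rest.bit_length() + 1
        self.registers[index] = max(self.registers[index], rank)

    def count(self) -> float:
        z = sum(2.0 ** -r for r in self.registers)
        estimate = self.alpha * self.m * self.m / z
        zeros = self.registers.count(0)
        if estimate <= 2.5 * self.m and zeros:
            # small cardinalities: fall back to linear counting
            estimate = self.m * math.log(self.m / zeros)
        return estimate

hll = HyperLogLog()
for i in range(100_000):
    hll.add(f"user-{i}")
print(round(hll.count()))  # close to 100000, typically within a few percent
```

The point is the memory footprint: 4096 small registers summarize any number of distinct values, and adding the same value again never changes the estimate.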

Note to Splunk Corp: It is a miracle that Splunk is not bleeding customers given how horribly slow it is. I highly suggest improving performance, maybe with a mode to count events without collecting their contents, just like what ELK does out-of-the-box.

Join Support or Lack Thereof

Splunk has amazing support for joins and transformations. Here are a few samples of what you can do.

type=mysql | rex field=message "slow query from (?<source>[^ ]+) took (?<duration>[^ ]+) seconds"

This can extract fields from database text logs like "slow query from 1.2.3.4 took 6.5 seconds". The extracted fields (source and duration) can be used as if they were fields from the event, to make charts or filter some more.
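For readers who don’t have Splunk at hand, the same kind of extraction can be sketched in Python with named groups. This is only an analogy to show what the extraction produces, not how Splunk does it internally; the function name is hypothetical.

```python
import re

# Named groups play the role of the extracted fields (source and duration).
SLOW_QUERY = re.compile(
    r"slow query from (?P<source>[^ ]+) took (?P<duration>[^ ]+) seconds"
)

def extract_fields(message: str) -> dict:
    """Return the extracted fields, or an empty dict if the message doesn't match."""
    match = SLOW_QUERY.search(message)
    return match.groupdict() if match else {}

fields = extract_fields("slow query from 1.2.3.4 took 6.5 seconds")
print(fields)
# → {'source': '1.2.3.4', 'duration': '6.5'}
```

Note that the extracted duration is still a string at this point; it has to be converted before it can be summed or compared.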

app=sendmail userid | transaction uid startswith="eventtype=login" endswith="eventtype=logout" maxspan=10m maxpause=10m

This can find events from sendmail and group them per userid as if they were continuous events. It lets you recreate transactions, effectively chains of related events.
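The grouping idea can be sketched in a few lines of Python. This is a simplified imitation and not Splunk’s exact semantics: it only handles the start event, the end event and a maximum pause, it ignores maxspan, and it keeps transactions that never saw a logout. The event layout (uid, time, eventtype) and the function name are assumptions for the example.

```python
from datetime import datetime, timedelta

def group_transactions(events, max_pause=timedelta(minutes=10)):
    """Group time-sorted events per uid, from a login up to the next logout."""
    open_tx = {}   # uid -> events of the transaction currently open
    closed = []
    for event in events:
        uid = event["uid"]
        current = open_tx.get(uid)
        # A long silence ends the open transaction.
        if current and event["time"] - current[-1]["time"] > max_pause:
            closed.append(open_tx.pop(uid))
            current = None
        if current is None:
            if event["eventtype"] != "login":
                continue  # ignore events outside a login..logout window
            current = open_tx[uid] = []
        current.append(event)
        if event["eventtype"] == "logout":
            closed.append(open_tx.pop(uid))
    closed.extend(open_tx.values())  # transactions that never saw a logout
    return closed

t0 = datetime(2020, 1, 1, 10, 0, 0)
sample = [
    {"uid": "alice", "time": t0, "eventtype": "login"},
    {"uid": "bob", "time": t0 + timedelta(seconds=30), "eventtype": "login"},
    {"uid": "alice", "time": t0 + timedelta(minutes=1), "eventtype": "send"},
    {"uid": "alice", "time": t0 + timedelta(minutes=2), "eventtype": "logout"},
    {"uid": "bob", "time": t0 + timedelta(minutes=5), "eventtype": "logout"},
]
transactions = group_transactions(sample)
for tx in transactions:
    print(tx[0]["uid"], [e["eventtype"] for e in tx])
# → alice ['login', 'send', 'logout']
# → bob ['login', 'logout']
```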

app=sshd | lookup inventory.csv on host_fqdn

This can find logs from ssh and enrich them with information about the host, taken from the csv file. Reference data (server inventory, employee information) can be stored in Splunk and significantly enrich what information is available.
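Conceptually, a lookup is a join between events and a reference table on a shared key. A minimal sketch in Python, assuming a small inventory whose columns (datacenter, owner) and hostnames are invented for the example:

```python
import csv
import io

# Hypothetical inventory; in practice this would be the csv file on disk.
INVENTORY_CSV = """host_fqdn,datacenter,owner
web1.example.org,london,web-team
db1.example.org,paris,dba-team
"""

def load_lookup(csv_text: str, key: str) -> dict:
    """Index the reference rows by the join key."""
    return {row[key]: row for row in csv.DictReader(io.StringIO(csv_text))}

def enrich(events, lookup: dict, key: str):
    """Yield each event merged with its matching reference row, if any."""
    for event in events:
        extra = lookup.get(event.get(key), {})
        yield {**extra, **event}  # fields already on the event win

inventory = load_lookup(INVENTORY_CSV, "host_fqdn")
logs = [
    {"app": "sshd", "host_fqdn": "db1.example.org", "message": "Accepted publickey"},
    {"app": "sshd", "host_fqdn": "unknown.example.org", "message": "Failed password"},
]
enriched = list(enrich(logs, inventory, "host_fqdn"))
print(enriched[0]["datacenter"], enriched[0]["owner"])
# → paris dba-team
```

Events whose host is not in the inventory pass through unchanged, so nothing is lost when the reference data is incomplete.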

ElasticSearch (Kibana and Graylog) has zero support for joins. It can’t join or transform a thing.

If you’ve got some logs like "new user registered user@example.org", you’re out of luck when it comes to extracting the email. You have to change the application to send structured logs or pass events through a grok processor (regex extractors in the logging pipeline).

Same thing if a field is a number but formatted as a string, which brings us to the next subject.

Catastrophic Typing in ElasticSearch

Assume structured logging, for example logs coming out of a webserver {request_method:GET request_path:/something request_status:200 request_duration:0.5}.

The concept of structure is fundamental, having structured fields unlocks the full power of centralized logging: searching, filtering, charting, aggregating, statistics, and combining, on anything.

Fields are typed: number, string, array, object. When working with ElasticSearch (Kibana and Graylog), the type of a field can be detected and configured automatically. There is an administration page to view and modify the mappings.

Which brings us to maybe THE most catastrophic issue in ElasticSearch. The quickest way to crash an ElasticSearch cluster is to accidentally log events with a conflicting field: try sending {"pid": 12345} and {"pid": "12345"} in an infinite loop. ElasticSearch decides on the field type when a field is ingested for the first time and it will reject any subsequent message that doesn’t match.

It’s a nightmare from an operations perspective because it drops logs for seemingly no reason and it can crash the cluster. Any field with an unexpected type prevents the whole event from being ingested by ElasticSearch. By default the node logs a long error message on failing to ingest an event, filling the local disk very quickly and crashing the node when it runs out of disk.
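The failure mode can be modelled with a toy index where the first value seen for a field fixes its type. This is a deliberately strict illustration, not ElasticSearch’s real logic: the real thing may coerce some values (a numeric string into a number field, for instance) depending on mapping settings. The class and exception names are hypothetical.

```python
class MappingConflict(Exception):
    """Raised when a field arrives with a type other than the one first seen."""

class TinyIndex:
    """Toy model of first-write-wins field typing."""

    def __init__(self):
        self.mapping = {}     # field name -> Python type fixed at first sight
        self.documents = []

    def ingest(self, doc: dict) -> None:
        # Validate every field first: one bad field rejects the whole event.
        for field, value in doc.items():
            expected = self.mapping.get(field, type(value))
            if not isinstance(value, expected):
                raise MappingConflict(
                    f"field {field!r} is {expected.__name__}, got {type(value).__name__}"
                )
        for field, value in doc.items():
            self.mapping.setdefault(field, type(value))
        self.documents.append(doc)

index = TinyIndex()
index.ingest({"app": "web", "pid": 12345})       # first sighting: pid is a number
try:
    index.ingest({"app": "web", "pid": "n/a"})   # conflicting type: event is lost
except MappingConflict as error:
    print("rejected:", error)
print(len(index.documents))
# → 1
```

The second event carried a perfectly good "app" field, yet none of it was stored, which is what makes the problem so hard to spot from the outside.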

There is no known cure for this. The problem is forever looming around and it’s impossible to ensure that no two applications would ever use one field with distinct content.

Which leads to deeper practical issues. Typing determines what operations can be performed: sum(duration) or duration < 1.0 require the field to be a number.

It’s problematic with software that has loosely typed logs. One major example is apache/nginx giving "response_time_ms=1234" on a successful request or possibly "response_time_ms=-" on a failed request. The response time here is inconsistent. The quick workaround is to format it as a string, because a string can store all possible values, but a string can’t be used like a number (app:web AND response_time_ms > 30.0).
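A less lossy workaround is to normalize the field before it reaches ElasticSearch, converting what parses as a number and replacing the rest with a default. A minimal sketch, assuming it runs somewhere in the logging pipeline and that a null is acceptable for the target field; the function names are hypothetical.

```python
def to_number(value, default=None):
    """Convert a value to float, falling back to a default when it doesn't parse."""
    try:
        return float(value)
    except (TypeError, ValueError):
        return default

def normalize(event: dict, numeric_fields) -> dict:
    """Return a copy of the event with the listed fields forced to numbers."""
    fixed = dict(event)
    for field in numeric_fields:
        if field in fixed:
            fixed[field] = to_number(fixed[field])
    return fixed

raw = [
    {"app": "web", "response_time_ms": "1234"},  # successful request
    {"app": "web", "response_time_ms": "-"},     # failed request
]
clean = [normalize(event, ["response_time_ms"]) for event in raw]
print(clean)
# → [{'app': 'web', 'response_time_ms': 1234.0}, {'app': 'web', 'response_time_ms': None}]
```

The catch is that somebody has to know about every such field in every application, which is exactly the part that doesn’t scale across an organization.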

Corollary: Kibana has some charting capability (similar to metrics) but it fails more often than not due to the number not being exactly a number.

Back to Splunk now. Splunk can ingest and chart ANYTHING thrown at it. It’s magical.

It doesn’t matter what the fields are or if they change between every event, everything just works all the time in Splunk (sum, division, smaller-than comparison, etc.).

I suppose it does conversions automatically, no idea. Any software that works well is indistinguishable from magic, so let’s just call Splunk magic!

People often ask me what the differences are between Splunk/Kibana/Graylog/ElasticSearch/other.

This typing limitation is the most noteworthy difference (along with join). It will stop companies trying to migrate away from Splunk dead in their tracks, because there is a 99% chance Splunk has been ingesting variable data over the years that ELK won’t be able to ingest. The solution might involve fixing dozens of disparate data sources across the organization, a difficult and time-consuming endeavor.

Note to Elastic Corp: To assist customers in their migration, I suggest improving the typing issues. Being able to forcefully convert a field to a chosen type, with a default value if invalid, would go a long way.

The Kibana UI is dreadful

No article on Kibana can be complete without mentioning the atrocity that is the Kibana UI. The most unintuitive user experience in the observable universe (git might compete for the position if it had a UI).

Don’t get me wrong, it is fully functional, in the same sense that a camel is a fully functional horse.

It can be used with a lot of practice and googling, once the search syntax is figured out, if ever. Rarely have I seen a developer able to use Kibana without handholding.

Graylog is easy, put “app=myapp” in the search bar and it’s finding things. Splunk is easy, similar.

Kibana is not like that. The search syntax is obtuse, the above string would find something but not quite the right thing, so it looks okay but it is not. There are numerous complicated symbols and escaping rules, like "http_path:\/api\/my-api\/*", remember to escape forward slash and hyphen.
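If you build search strings programmatically, the escaping can at least be automated. A minimal sketch; the list of reserved characters is my reading of the Lucene query syntax and should be treated as an assumption, and the function name is hypothetical.

```python
# Characters treated as reserved by the Lucene query syntax (assumed list).
RESERVED = set('\\+-!():^[]"{}~*?|&/')

def escape_term(value: str) -> str:
    """Backslash-escape every reserved character in a search term."""
    return "".join("\\" + ch if ch in RESERVED else ch for ch in value)

# The wildcard is appended after escaping, otherwise it would be escaped too.
print("http_path:" + escape_term("/api/my-api/") + "*")
# → http_path:\/api\/my\-api\/*
```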

The syntax should be easy enough to figure out on the fly. After a search, there are buttons to filter further on the events: click “+” on the hostname and it’s going to adjust the search "... AND hostname:uk\-123\.company\.com". Except that Kibana, unlike every other tool, doesn’t do that. User actions don’t modify the search string; they add entirely new UI elements that combine to form the search, so you can never figure out what the actual search syntax is.

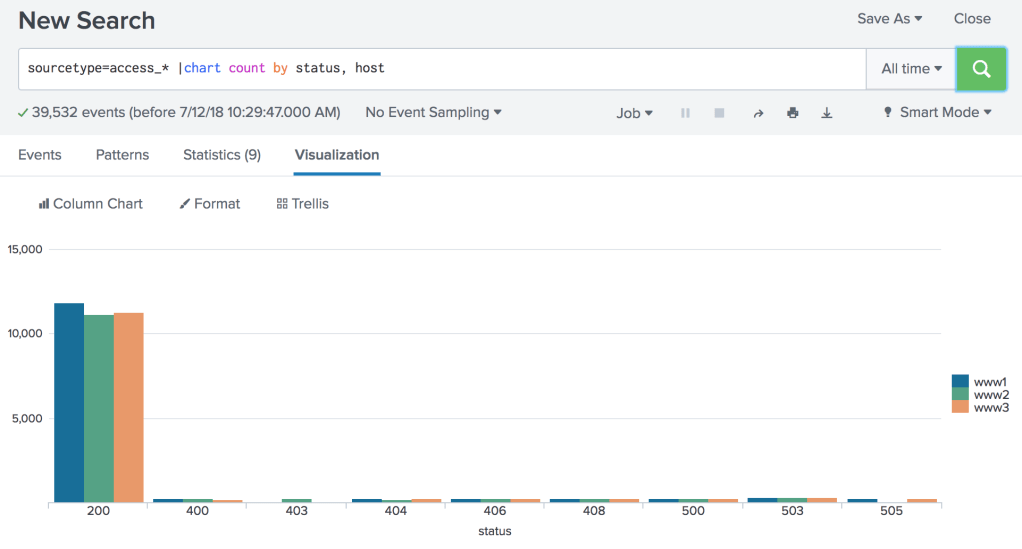

Now onto charts and dashboards. A chart in Splunk can be derived from any query "app=web | timechart count() by hostname span=24h". See the timechart statement. It’s trivial to pop charts out of anything anytime. Once you start doing it, you can’t live without it.

Doing the same chart in Kibana requires a minimum of 12 clicks, involving intricate menus and submenus and submenus of submenus. It is a nightmare.

Not sure about Graylog, charting was easy but very limited last I was using it, there should be more functionality in the newer releases.

Conclusion

I think I covered everything major. Choice is open between:

- Splunk, a tool with incredible joining and charting capability, that is dog slow and costs a fortune.

- ElasticSearch (both Kibana and Graylog), a lightning fast distributed database, that can’t do a join and might crash if a message is a string or is not a string.

- Kibana, a functional but unintuitive search interface that gets the job done with enough practice, with good charting, more so when the data happens to be in the right format.

- Graylog, a tool in between, a simple user interface with limited charting capabilities (not sure about the latest versions), that does not hide all essential features behind a paywall.

They are all solid tools in spite of their respective weaknesses.

“The most intuitive user experience in the observable universe”

Don’t you mean “unintuitive”?

Yes, thanks!

Looks like my spell checker autocorrected all the “unintuitive”, not accepting it as a word.

Thanks for this article. I’ve always wondered what the deal was with splunk (never used it, only heard of it).

Nice comparison with kibana. I’ve used it often and eventually got around its issues, but I guess I never realized how bad it really was. Probably because I never knew how pleasantly splunk & co could display that data.

It’s day and night in usability, especially for charts.

You really have to try Splunk if you can get a chance. It’s magical how you can chart anything and it’s so easy. Words can’t capture the experience.

This covers a lot of the finer points, good stuff. Although sounds like you weren’t using accelerated data models with Splunk? For a minor increase in storage you can literally (and not figuratively literally, literally literally) gain a 100-200x increase in speed VS searching on raw logs. For any alerting or dashboards it’s a must, and Splunk professional services fails to mention this regularly.

As much as I love open source, the lack of eval/transform/regex capabilities makes me sad. There are some plugins to hodgepodge *some* of that in, but they regularly break with updates.

I am aware of the report and data accelerators. Never managed to get anything out of them:

1) They require editing splunk configuration files on the splunk nodes. That’s a non starter because users don’t have access to splunk servers in the company.

2) They have to be configured per search/report/field/index, quite the amount of configuration (and not a trivial one at that). That’s a non starter because users are not gonna raise a splunk reconfiguration request to a sysadmin whenever they want to create a fancy report or ingest more data.

Basically it’s plain unusable in any work environment (different people operating Splunk and running queries).

Yikes! I thought the communication between our Splunk teams was bad, but if you can’t use a 100-200x increase in efficiency to justify and accelerate a business use case then it sounds like a people problem more than a tech problem. You can edit data models in the GUI with the right privileges and they don’t have to be configured per search/report/field/index, once the datamodel has data from any sourcetype/index then you can play with the data any which way with tstats. An absolute must for dashboards, reports, and saved searches.

Sending well wishes, prayers, and good luck to any team who can’t manage to get their business / admins on board with something so essential to a successful Splunk instance. (/¯◡ ‿ ◡)/¯

We can agree that it’s both a human and a tech problem 😉

Splunk is enterprise and enterprise means segregated departments with tight access control. I don’t think it’s easy to get Splunk admin access or SSH access in most organizations.

If the product is unusable (it really is) except for an obscure magic flag, then it ought to be fixed out of the box (100 times speedup is the bare minimum to make it usable, running queries at 1:1000 or 1:10000 sampling is not infrequent).

the search query makes all the difference.

for example, if we just need the number of events, a stats count search is pretty fast (much faster than 100k/sec), and a tstats search is about 10x+ faster as well. (and recently, there’s auto conversion of stats –> tstats searches!)

see this great set of slides: https://conf.splunk.com/files/2017/slides/searching-fast-how-to-start-using-tstats-and-other-acceleration-techniques.pdf

First thanks for the excellent comparison.

Data models are a way to speed up Splunk a lot, but in my experience they are used seldom for various reasons.

– It is a trade off between throwing compute and I/O power at it in advance to maintain the data models, or to use the compute and I/O power during search. The team running the Splunk infrastructure is usually not keen on throwing compute power at a problem in advance, so they don’t push it with the argument why keep a fast data-model if it is only searched once in a week. And the additional load can be significant, we had enabled data models for Splunk ES in a 100 node indexer cluster, this increased the load on the nodes by 20%.

– It needs some planning and gives more work during the onboarding process of data sources.

– It’s not well understood by most people.

I also agree that the communication between the search heads and the indexers for the map-reduce process is not awfully fast.

There are ways to speed up your searches: filtering the results early in the pipeline, using the tsindex, creating base searches and using them like a data cube, and so on. For this you have to know how Splunk operates internally. There are a few excellent guides, search for “speed up Splunk searches”.
