Let’s store 10 million users in an LDAP directory. Is it possible? If so, what hardware does it take?

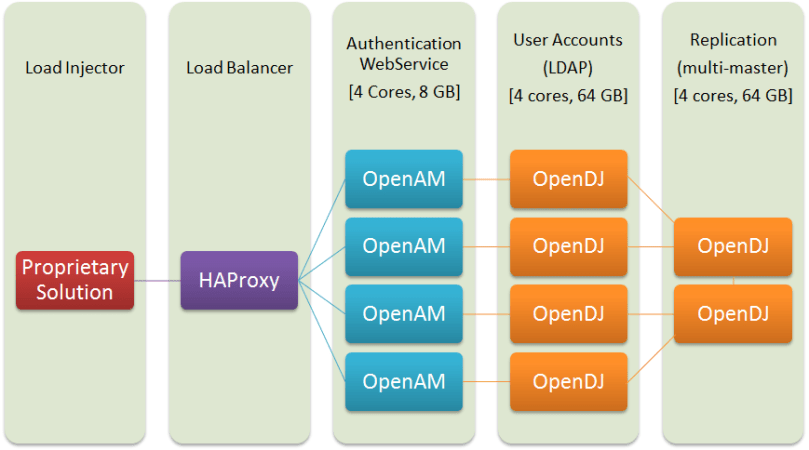

Benchmark Setup

Test Scenario

Same scenario and procedure as the previous article comparing LDAP servers with 100k user accounts.

Hardware

One rectangle is one server. Lines represent connections.

Load testing is done using the OpenAM REST API.

The load injector is a distributed proprietary solution we pay a lot of money for. It could use a full diagram on its own.

Software and Configuration

- CentOS 6.7

- Oracle JDK 7 (special edition)

- OpenDJ 2.6

- OpenAM 12 (tomcat7)

We have a special package of the Oracle JDK given by Oracle. It includes custom changes and bugfixes before they reach the general public. We’re a Java shop with big critical projects and that’s one of the advantages we get with the support contract.

All applications are tuned for maximum performance:

- HAProxy: leastconn balancing, persistent sessions with cookies, 10 Gb networking, up to 16 000 HTTP requests/s during this benchmark

- OpenAM: disable debug logs, log level info, LDAP connections pool = 65, tomcat thread pool = 400, JVM Heap = 4GB

- OpenDJ: log level info, multi-master replication, memory for database caching = 80%, JVM heap = 50 GB

We spent a lot of time tuning the JVM settings: evaluating ConcMarkSweep against the G1 garbage collector, and the effects of generation sizes and other settings. That was an utter waste of time. It barely has any influence on the applications (±1%).

A bit of history: OpenDJ was originally developed by Sun, who invented Java and the JVM. The story is that Sun used OpenDJ internally as a benchmark during the development of the G1 garbage collector. G1 is the latest Java garbage collector, recommended when using more than 8 cores and 30 GB of memory.

A Word About OpenDJ Replication

OpenDJ supports master-master replication. Any instance can be written to and changes are propagated to other instances.

Master-slave replication is not supported and will never be. [It’s never needed anyway].

“This is a worldwide deployment with many directory services in 4 regions and 8 replication services fully connected. Each directory service is connected to a single replication server, but can failover in matter of seconds, by priority in the same region.”

Source: visualizing the opendj replication topology, Ludovic Poitou Blog, ForgeRock Product Manager

There are dedicated directory nodes to store user accounts (outer circle) and dedicated replication nodes to handle replication (inner circle). This architecture is scalable and it’s intended to scale to multiple datacenters, worldwide. For this benchmark we’re using a single site with 4 directory nodes and 2 replication nodes.

There is a common myth saying that LDAP replication sucks. To be accurate, it is only the OpenLDAP replication (and ApacheDS) that is poorly done and buggy. The OpenDJ replication is well designed and works flawlessly.

There are very few databases in existence supporting worldwide multi-site replication, let alone intended for critical production environments. OpenDJ is part of the elite few, alongside Cassandra from Facebook plus BigTable and Spanner from Google.

Test Results

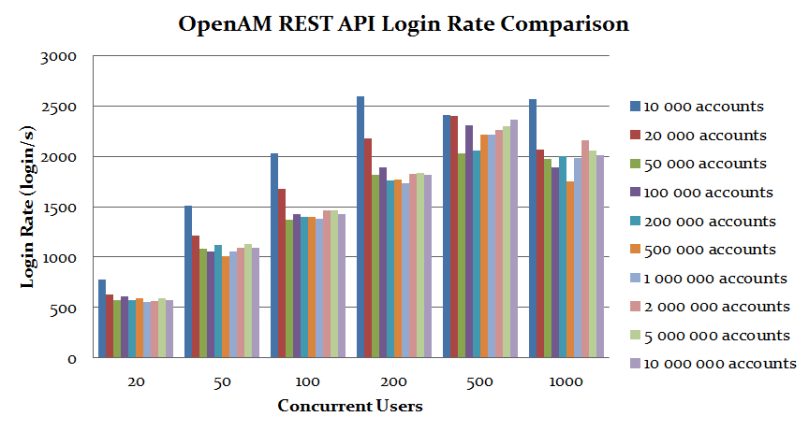

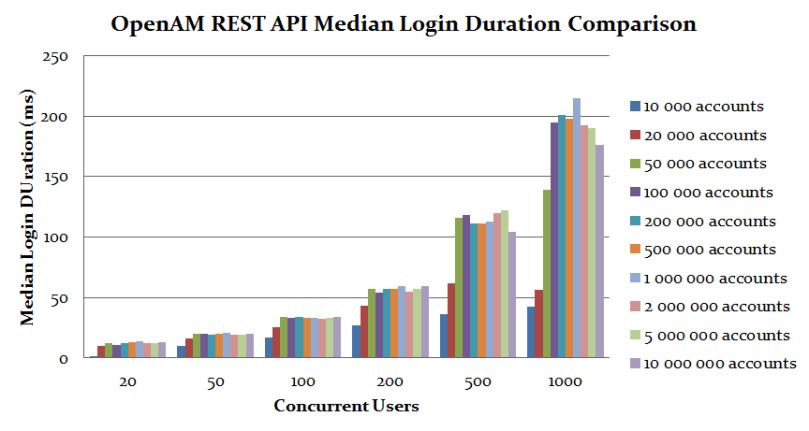

Performance

An OpenAM server will only connect to one OpenDJ server at any time. The current setup is a simple architecture with the same number of OpenAM and OpenDJ servers, with the connection affinity configured to group them in pairs.

The bottleneck here is the CPU usage on OpenAM. The tests are pushing OpenAM servers to constant 100% CPU usage while OpenDJ servers are running at 30%.

We could EITHER double the number of cores on all the OpenAM servers to double the throughput (for slightly increased latency) OR get the same performance with only two OpenDJ directories.
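To make the second option concrete, here is a rough back-of-the-envelope sketch. It assumes CPU usage scales linearly with load and that the load spreads evenly across servers, which is a simplification. The function name is ours for illustration, not part of any tool.

```python
def cpu_percent_after_consolidation(servers_before, cpu_percent_before, servers_after):
    """Average CPU per server if the same total load runs on fewer servers.

    Assumes linear scaling and an even spread of load (a simplification).
    """
    total_load = servers_before * cpu_percent_before
    return total_load / servers_after


# 4 OpenDJ directory nodes at about 30% CPU, consolidated onto 2 nodes
print(cpu_percent_after_consolidation(4, 30, 2))
```

Under those assumptions the two remaining directories would still sit well below saturation, which is why halving them should not hurt the results.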

OpenDJ Memory and Disk Consumption

Disk usage increases linearly with the number of user accounts. The official ForgeRock recommendation is to set OpenDJ heap to [at least] twice the size of the on-disk database to be able to cache everything.
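The sizing rule is simple enough to write down. This is only a sketch of the "heap at least twice the on-disk database" rule quoted above; the helper names are ours, and the 20 GB database size is a made-up input, not a measurement from this benchmark.

```python
HEAP_TO_DB_FACTOR = 2.0  # "at least twice the size of the on-disk database"


def min_heap_gb(db_size_on_disk_gb, factor=HEAP_TO_DB_FACTOR):
    """Smallest heap that satisfies the rule for a given on-disk database size."""
    return db_size_on_disk_gb * factor


def max_cacheable_db_gb(heap_gb, factor=HEAP_TO_DB_FACTOR):
    """Largest on-disk database the rule allows for a given heap."""
    return heap_gb / factor


print(min_heap_gb(20))          # hypothetical 20 GB database
print(max_cacheable_db_gb(50))  # the 50 GB heap used in this benchmark
```

Read the other way around, the 50 GB heap used here covers an on-disk database of up to 25 GB under that rule.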

OpenAM Memory Per Session

This is an estimate of the memory usage per open session. There is no way to measure it directly with confidence. It is an upper bound obtained by averaging different memory metrics across multiple OpenAM instances over a few test runs.

The high peak at the beginning is because we can’t properly distinguish memory used by sessions from general server memory usage: having only a few sessions at the start gives an inflated average. The peak at the end is because the JVM optimizes and reclaims memory aggressively when the process is close to its maximum heap allowance.
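The peak at the start is easy to reproduce with a toy model. The sketch below divides total memory by the number of sessions, the way a naive average would. The 500 MB baseline is a made-up number for illustration only, and the function name is ours.

```python
def naive_per_session_kb(baseline_kb, true_per_session_kb, sessions):
    """Total memory divided by session count.

    The fixed server baseline is spread over the sessions, so the result
    overestimates the real per-session cost when there are few sessions.
    """
    total_kb = baseline_kb + true_per_session_kb * sessions
    return total_kb / sessions


# Hypothetical 500 MB baseline, 15 KB true cost per session
for sessions in (100, 10_000, 100_000):
    print(sessions, naive_per_session_kb(500_000, 15, sessions))
```

The estimate starts far above the real cost and drifts down toward it as sessions accumulate, which matches the shape of the left side of the graph.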

One session requires about 15 KB of memory. This is consistent with the official ForgeRock recommendation to allocate a 3 GB heap to OpenAM for 100 000 sessions. For more sessions it’s recommended to scale horizontally, with each instance holding 100 000 sessions or fewer.
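A quick sanity check of that figure against the recommendation, as a sketch. It uses decimal units (1 GB = 1 000 000 KB), which is our assumption, and the function name is ours.

```python
KB_PER_SESSION = 15  # upper-bound estimate from the graph above


def session_memory_gb(sessions, kb_per_session=KB_PER_SESSION):
    """Memory taken by sessions alone, in decimal GB (1 GB = 1,000,000 KB)."""
    return sessions * kb_per_session / 1_000_000


print(session_memory_gb(100_000))
```

By this estimate the sessions themselves take about half of the recommended 3 GB heap, leaving the rest for the server itself.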

Conclusion

There is a boost in performance under 50 000 user accounts. Afterwards, performance is flat no matter the number of user accounts. Thus performance is not linked to the number of user accounts. Who would have guessed?

OpenDJ and OpenAM were flawless during all stress tests. No bug, no crash, no error, nothing to report.

When you want to store user accounts in an LDAP directory and authenticate through OpenID Connect and SAML with optional two-factor authentication, for 10 million users across the globe, OpenAM with OpenDJ is the go-to solution. In fact it’s the only solution available on the planet to support all of this.

Great post! I have a question: did you put all 10 million users into one single OpenDJ and enable replication with 104 OpenDJ servers? Or is the diagram just an example of OpenDJ replication?


The picture is an illustration from the official documentation.

The tests are done with 4 data nodes and 2 replication nodes, as shown in the first diagram in the article.


Thanks for the reply. It is pretty amazing to see an OpenDJ with 50GB JVM to hold 10M users!


Has anyone tested the maximum number of Directory Servers that can be connected to a Replica Server? I am having an issue where I cannot have more than 128 DS connected to a single RS.
